Image by Author

Running large language models (LLMs) locally can be super helpful—whether you’d like to play around with LLMs or build more powerful apps using them. But configuring your working environment and getting LLMs to run on your machine is not trivial.

So how do you run LLMs locally without any of the hassle? Enter Ollama, a platform that makes local development with open-source large language models a breeze. With Ollama, everything you need to run an LLM—model weights and all of the config—is packaged into a single Modelfile. Think Docker for LLMs.

In this tutorial, we’ll take a look at how to get started with Ollama to run large language models locally. So let’s get right into the steps!

Step 1: Download Ollama to Get Started

As a first step, you should download Ollama to your machine. Ollama is supported on all major platforms: MacOS, Windows, and Linux.

To download Ollama, you can either visit the official GitHub repo and follow the download links from there. Or visit the official website and download the installer if you are on a Mac or a Windows machine.

I’m on Linux: Ubuntu distro. So if you’re a Linux user like me, you can run the following command to run the installer script:

$ curl -fsSL | sh

The installation process typically takes a few minutes. During the installation process, any NVIDIA/AMD GPUs will be auto-detected. Make sure you have the drivers installed. The CPU-only mode works fine, too. But it may be much slower.

Step 2: Get the Model

Next, you can visit the model library to check the list of all model families currently supported. The default model downloaded is the one with the latest tag. On the page for each model, you can get more info such as the size and quantization used.

You can search through the list of tags to locate the model that you want to run. For each model family, there are typically foundational models of different sizes and instruction-tuned variants. I’m interested in running the Gemma 2B model from the Gemma family of lightweight models from Google DeepMind.

You can run the model using the ollama run command to pull and start interacting with the model directly. However, you can also pull the model onto your machine first and then run it. This is very similar to how you work with Docker images.

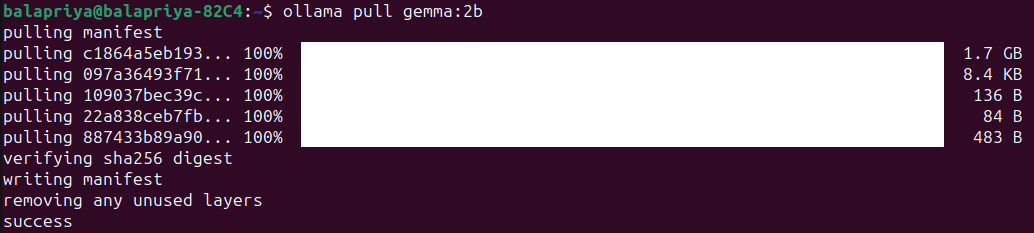

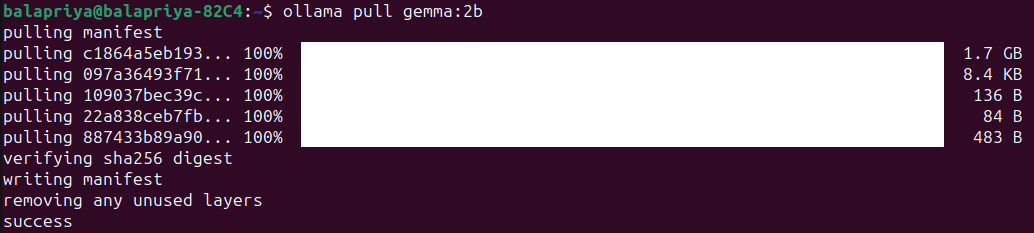

For Gemma 2B, running the following pull command downloads the model onto your machine:

The model is of size 1.7B and the pull should take a minute or two:

Step 3: Run the Model

Run the model using the ollama run command as shown:

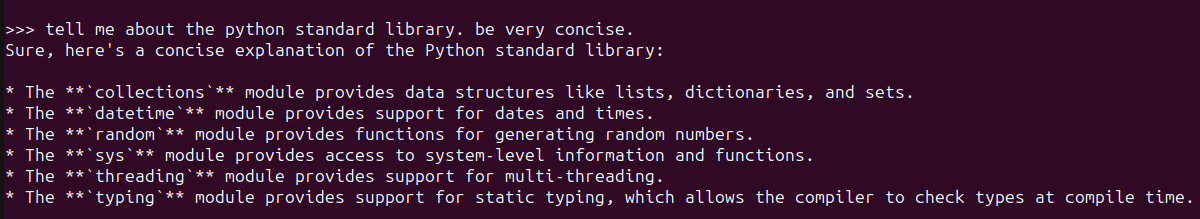

Doing so will start an Ollama REPL at which you can interact with the Gemma 2B model. Here’s an example:

For a simple question about the Python standard library, the response seems pretty okay. And includes most frequently used modules.

Step 4: Customize Model Behavior with System Prompts

You can customize LLMs by setting system prompts for a specific desired behavior like so:

- Set system prompt for desired behavior.

- Save the model by giving it a name.

- Exit the REPL and run the model you just created.

Say you want the model to always explain concepts or answer questions in plain English with minimal technical jargon as possible. Here’s how you can go about doing it:

>>> /set system For all questions asked answer in plain English avoiding technical jargon as much as possible

Set system message.

>>> /save ipe

Created new model 'ipe'

>>> /bye

Now run the model you just created:

Here’s an example:

Step 5: Use Ollama with Python

Running the Ollama command-line client and interacting with LLMs locally at the Ollama REPL is a good start. But often you would want to use LLMs in your applications. You can run Ollama as a server on your machine and run cURL requests.

But there are simpler ways. If you like using Python, you’d want to build LLM apps and here are a couple ways you can do it:

- Using the official Ollama Python library

- Using Ollama with LangChain

Pull the models you need to use before you run the snippets in the following sections.

Using the Ollama Python Library

To use the Ollama Python library you can install it using pip like so:

There is an official JavaScript library too, which you can use if you prefer developing with JS.

Once you install the Ollama Python library, you can import it in your Python application and work with large language models. Here’s the snippet for a simple language generation task:

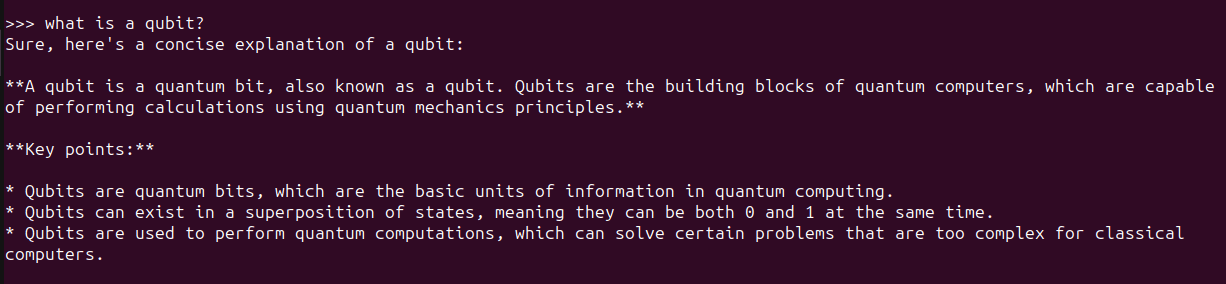

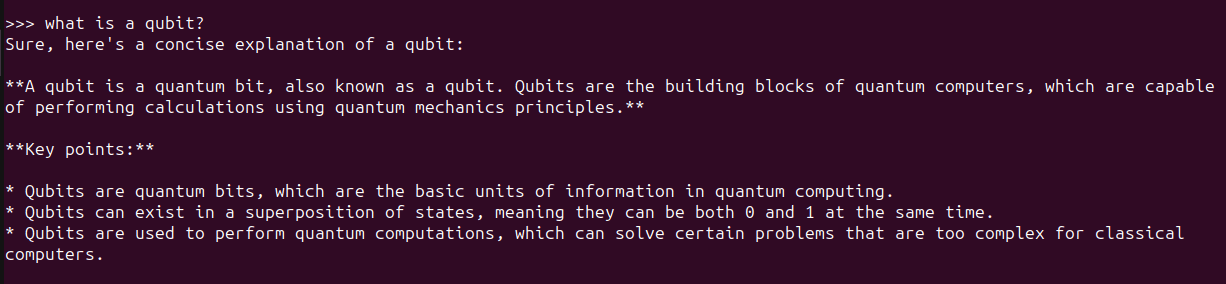

import ollama

response = ollama.generate(model="gemma:2b",

prompt="what is a qubit?")

print(response['response'])

Using LangChain

Another way to use Ollama with Python is using LangChain. If you have existing projects using LangChain it’s easy to integrate or switch to Ollama.

Make sure you have LangChain installed. If not, install it using pip:

Here’s an example:

from langchain_community.llms import Ollama

llm = Ollama(model="llama2")

llm.invoke("tell me about partial functions in python")

Using LLMs like this in Python apps makes it easier to switch between different LLMs depending on the application.

Wrapping Up

With Ollama you can run large language models locally and build LLM-powered apps with just a few lines of Python code. Here we explored how to interact with LLMs at the Ollama REPL as well as from within Python applications.

Next we’ll try building an app using Ollama and Python. Until then, if you’re looking to dive deep into LLMs check out 7 Steps to Mastering Large Language Models (LLMs).

Bala Priya C is a developer and technical writer from India. She likes working at the intersection of math, programming, data science, and content creation. Her areas of interest and expertise include DevOps, data science, and natural language processing. She enjoys reading, writing, coding, and coffee! Currently, she’s working on learning and sharing her knowledge with the developer community by authoring tutorials, how-to guides, opinion pieces, and more. Bala also creates engaging resource overviews and coding tutorials.